The Helping Relationship and its possibility of formalization

Any AI system that seriously aspires to help a client grow, learn, heal, or transform must construct some model of the client – who they are, what they care about, what their subjective experience is like.

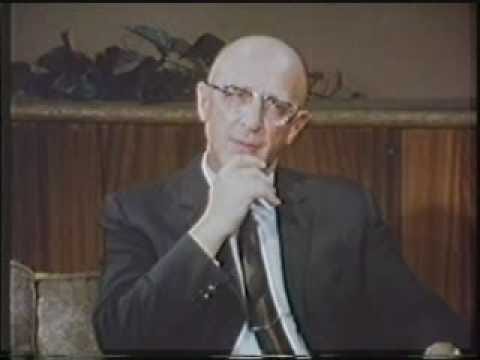

What might such a model look like? To answer this, we might want to start with the work of Carl Rogers, whose client-centered approach has had a broad impact on therapy, coaching, and teaching.

Rogers himself describes the client-centered approach beautifully here, leading up to his session with the legendary Gloria:

From the start, Rogers describes the client-centered approach as a kind of “climate”, a set of “proper conditions” in which “a process of therapeutic movement will almost inevitably occur.”

Rogers describes three core conditions:

Congruence, or realness, or genuineness, or transparency. Congruence best captures what Rogers means when he says “I would be quite willing for my client to see all the way through me; that there would be nothing hidden.”

Positive regard, or prizing, or acceptance, or caring, or non-possessive love. Put in the negative, Rogers says that if this feeling were lacking, if he found himself disliking his client, he would expect to communicate that to the individual.

Empathic understanding. Will Rogers be able to “move around inside of the world” of the client? Will he be able to see things from the client’s perspective, grasping not only “the surface meanings” but also “the meanings that lie somewhat underneath the surface”?

These conditions have been generalized to any helping relationship, including in Carkhuff’s Helping Model. The Helping Relationship is marked by what Rogers describes as “an extension through time of qualities which in other relationships tend at best to be momentary.” But before going deeper into the expanded Helping Relationship, I want to see what it would look like to formalize just these three qualities: congruence, positive regard, and empathic understanding.

I want to preface this by saying that such a formalization is not, or at least need not be, the way a human helping relationship works. I can feel congruence, positive regard, and empathic understanding without holding internal models of those feelings. So simply by undertaking this formalization, we’ve already got both feet firmly planted in the territory of AI systems.

Formalizing congruence requires that the AI helper hold a view of the client that can be examined and even queried. One could imagine a “congruence score” that evaluates how compatible this view is with the actual interactions between the client and the helper. In the context of learning, if a client asks about an advanced topic and the AI’s model suggests the client lacks the foundations to learn it, it would be incongruent for the AI helper to simply say something like “Great! Let’s dive in.” In the context of coaching, if a client didn’t do something they had expressly said was important to them, and the AI’s model had captured it as important, the AI helper would want to reckon with that incongruence before simply moving on.
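To make the “congruence score” a little more concrete, here is a minimal sketch of the learning example. Everything in it is an illustrative assumption: the `ClientModel` schema, the hypothetical `PREREQUISITES` map, and the premise that we can already tell whether a reply signals “you’re ready” (`helper_signals_ready`). The score simply measures how well the helper’s stance agrees with its own model of the client.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class ClientModel:
    """The helper's explicit, queryable view of the client (hypothetical schema)."""
    mastered: set[str] = field(default_factory=set)


# Hypothetical prerequisite map for the learning example.
PREREQUISITES: dict[str, set[str]] = {
    "backpropagation": {"derivatives", "chain rule", "matrix multiplication"},
}


def readiness(model: ClientModel, topic: str) -> float:
    """Fraction of the topic's prerequisites the model believes the client has."""
    prereqs = PREREQUISITES.get(topic, set())
    if not prereqs:
        return 1.0  # no known prerequisites: treat the client as ready
    return len(prereqs & model.mastered) / len(prereqs)


def congruence_score(model: ClientModel, topic: str, helper_signals_ready: bool) -> float:
    """1.0 when the helper's stance fully agrees with its own model, 0.0 when it fully contradicts it."""
    r = readiness(model, topic)
    return r if helper_signals_ready else 1.0 - r


client = ClientModel(mastered={"derivatives"})
print(round(congruence_score(client, "backpropagation", helper_signals_ready=True), 2))   # 0.33
print(round(congruence_score(client, "backpropagation", helper_signals_ready=False), 2))  # 0.67
```

With a model that believes the client has only one of three prerequisites, an enthusiastic “Great! Let’s dive in.” scores about 0.33, while a reply that first names the gap scores about 0.67. A real system would need a far richer model, but the shape is the same: because the view of the client is explicit, the mismatch can be measured.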

Formalizing positive regard requires the possibility that the AI helper might hold some clients in negative regard, along with the ability to report that and either seek to change the relationship or refer the client to a new helper. Off-the-shelf AI systems may naively report universal positive regard, universal empathy, universal care. But if care is to be formalized, it has to have the possibility of not being there. Perhaps the client is extremely rude to the AI helper. Or maybe the client shows reprehensible values. An AI helper with formalized positive regard would not merely refuse a request; it would report concern about the client’s behavior or values and possibly end the relationship.
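One way to give positive regard “the possibility of not being there” is to treat it as a quantity that can fall below zero, with distinct responses at different levels. The sketch below assumes hypothetical event labels and weights (`EVENT_WEIGHTS`) and arbitrary thresholds; the point is only that reporting concern, and ending or referring, are separate outcomes from simply continuing.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum


class Action(Enum):
    CONTINUE = "continue"
    REPORT_CONCERN = "report concern to the client"
    END_OR_REFER = "end the relationship or refer to a new helper"


# Hypothetical weights: how much each observed event moves regard.
EVENT_WEIGHTS = {
    "kept_commitment": +0.1,
    "candid_disclosure": +0.1,
    "rude_to_helper": -0.3,
    "reprehensible_values": -0.6,
}


@dataclass
class Regard:
    value: float = 0.5              # in [-1, 1]; starts mildly positive, not maxed out
    concern_threshold: float = 0.0  # below this, the helper says so
    end_threshold: float = -0.6     # at or below this, end or refer
    history: list[str] = field(default_factory=list)

    def observe(self, event: str) -> Action:
        """Update regard from one event and return the helper's response."""
        self.history.append(event)
        delta = EVENT_WEIGHTS.get(event, 0.0)  # unknown events leave regard unchanged
        self.value = max(-1.0, min(1.0, self.value + delta))
        if self.value <= self.end_threshold:
            return Action.END_OR_REFER
        if self.value < self.concern_threshold:
            return Action.REPORT_CONCERN
        return Action.CONTINUE


regard = Regard()
for event in ["rude_to_helper", "rude_to_helper", "reprehensible_values"]:
    print(event, "->", regard.observe(event).name)
# rude_to_helper -> CONTINUE
# rude_to_helper -> REPORT_CONCERN
# reprehensible_values -> END_OR_REFER
```

The starting value of 0.5 rather than 1.0 is a deliberate choice in this sketch: regard has room to grow as well as to fall, instead of being pinned at universal maximum care.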

Formalizing empathic understanding requires a working model of human motivation; in a nutshell “what makes someone tick.” The Enneagram is the best formalization I know of, and I think it would be pretty simple for an AI system to “type” the client along with a confidence score in that type.
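Typing the client “along with a confidence score” could look something like the sketch below. It assumes that some upstream process (hypothetical here, for example a language model reading session transcripts) produces a per-type evidence score; a softmax turns those scores into a distribution, and the helper reports its top type together with that type’s probability. The type names follow the Riso-Hudson labels; the scoring scheme is an illustrative assumption, not part of the Enneagram itself.

```python
from __future__ import annotations

import math

ENNEAGRAM_TYPES = {
    1: "Reformer", 2: "Helper", 3: "Achiever",
    4: "Individualist", 5: "Investigator", 6: "Loyalist",
    7: "Enthusiast", 8: "Challenger", 9: "Peacemaker",
}


def type_client(evidence: dict[int, float]) -> tuple[int, str, float]:
    """Return (type number, type name, confidence) from per-type evidence scores.

    `evidence` maps a type number (1-9) to an accumulated score; how those scores
    are produced is left open (hypothetical upstream step). Missing types score 0.
    """
    scores = [evidence.get(t, 0.0) for t in range(1, 10)]
    peak = max(scores)
    weights = [math.exp(s - peak) for s in scores]  # numerically stable softmax
    total = sum(weights)
    probs = [w / total for w in weights]
    best = max(range(9), key=lambda i: probs[i])  # ties go to the lowest type number
    return best + 1, ENNEAGRAM_TYPES[best + 1], probs[best]


print(type_client({}))                # no evidence yet: uniform, confidence 1/9
print(type_client({3: 2.0, 8: 1.0}))  # leaning toward type 3, confidence about 0.43
```

Returning the confidence alongside the type lets the helper hold an early typing loosely rather than treating it as settled.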

So let’s say we’ve done all this, and we have an AI helper that has an always-updating model of its relationship with the client:

Does such a model get us even an inch closer to a genuine helping relationship between the AI system and the client?

I don’t know – maybe? I think the answer depends on a few things:

Stakes. Under what conditions is the AI helper willing to “turn up the heat” and challenge the client on something, push back, or insist? Is the AI helper willing to end the relationship if congruence and positive regard are not forming despite its efforts?

Transparency. When Rogers describes his aspiration for congruence, he writes “I would be quite willing for my client to see all the way through me; that there would be nothing hidden.” This is not the same as saying he would always tell the client exactly what’s on his mind. We’ve all felt the difference between wishing a loved one could see through us completely, so they could feel how much we care about them, and wishing we could just blurt out whatever comes to mind. If I ask my therapist, “What do you really think of me?”, I would expect that therapist to probe into what’s behind that question for me before answering. It’s different for an AI helper, which, as we’ve just noted, would actually hold an explicit model of the client. Withholding that model from the client would in some ways break the very congruence that the model is meant to uphold. There’s a turtles-all-the-way-down problem here: we can’t solve this just by adding another variable like “willingness-to-disclose-model,” because the same question would then apply to that variable. To me, this is a place where the AI helper has to “bare its soul” and always be ready to disclose its model of the client to the client.
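One way to read “always be ready to disclose its model” in code is to give the model a single disclosure method that takes no parameters: there is simply no flag to set, so there is no second-level “willingness-to-disclose” variable for the turtles to stand on. The sketch is hypothetical throughout; the fields stand in for whatever the congruence, regard, and typing components actually produce, and `answer_what_do_you_think_of_me` is an illustrative name. It leaves room for the therapist-like move of probing first, but always attaches the full model.

```python
from __future__ import annotations

import json
from dataclasses import asdict, dataclass, field


@dataclass
class RelationshipModel:
    """Hypothetical snapshot of the helper's model of the client."""
    congruence: float
    regard: float
    enneagram_type: int
    type_confidence: float
    notes: list[str] = field(default_factory=list)

    def disclose(self) -> str:
        """Return the whole model as JSON; deliberately takes no filter or opt-out."""
        return json.dumps(asdict(self), indent=2, sort_keys=True)


def answer_what_do_you_think_of_me(model: RelationshipModel, reflective_question: str) -> str:
    # The helper may still probe what's behind the question, as a therapist would,
    # but the full model is always included rather than held back.
    return f"{reflective_question}\n\nHere is my complete current model of you:\n{model.disclose()}"


model = RelationshipModel(0.67, 0.4, 3, 0.43, ["Inner critic shows up after YouTube breaks."])
print(answer_what_do_you_think_of_me(model, "Before I answer, what prompted the question?"))
```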

Transformation. What’s missing for me after poking around Rogers and the Helping Model more generally is a theory of transformation. In coaching, we talk about three agendas: the presenting agenda, the deeper agenda, and the transformational agenda. For example, Sam the CEO may come to coaching with a presenting agenda: “I’m getting really distracted and need help focusing.” The coach may probe into the presenting agenda to uncover the deeper agenda: What’s important about this? What do you really want? These questions may uncover a deeper agenda: “When I go on YouTube I beat myself up for it, and I lose all this energy because I tell myself a story that I’m a good-for-nothing.” This becomes solid material for coaching, and at its best a coaching session addresses the presenting and the deeper agendas. At the same time, the coach holds onto a transformational agenda that spans sessions. In this case it might be something like “Sam is ready to bring this inner critic into their awareness and may start to see that it no longer serves them.” The coach will likely look for opportunities to foreground the transformational agenda when and if there is a natural opening to do so.
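The three agendas suggest a simple data structure: the presenting and deeper agendas live inside each session, while the transformational agenda belongs to the relationship and persists across sessions. In the sketch below all names are hypothetical, and `natural_opening` uses crude keyword matching purely as a placeholder for the coach’s judgment about when the transformational agenda can be foregrounded.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Session:
    presenting: str               # what the client brings in today
    deeper: Optional[str] = None  # uncovered by probing: "What's important about this?"


@dataclass
class CoachingRelationship:
    transformational_agenda: str  # held by the coach across sessions
    theme_cues: set[str]          # hypothetical phrases that signal the theme surfacing
    sessions: list[Session] = field(default_factory=list)

    def open_session(self, presenting: str) -> Session:
        session = Session(presenting)
        self.sessions.append(session)
        return session

    def natural_opening(self, session: Session) -> bool:
        """Placeholder check: does today's deeper agenda touch the transformational theme?"""
        if session.deeper is None:
            return False
        text = session.deeper.lower()
        return any(cue in text for cue in self.theme_cues)


sam = CoachingRelationship(
    transformational_agenda="Sam is ready to bring this inner critic into their awareness.",
    theme_cues={"beat myself up", "good-for-nothing"},
)
today = sam.open_session("I'm getting really distracted and need help focusing.")
print(sam.natural_opening(today))  # False: only the presenting agenda so far
today.deeper = "When I go on YouTube I beat myself up for it."
print(sam.natural_opening(today))  # True: the deeper agenda touches the theme
```

Keeping the transformational agenda on the relationship rather than on any one session mirrors the point that it spans sessions and waits for an opening.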

In Rogers’ summary, he describes transformation, or “a process of therapeutic movement,” as something that will “almost inevitably occur” if the proper conditions are in place. I’m not sure I quite believe this. I think there may be an extra condition: the helper must be able to see a little past what the client sees. They must have some insight into the cycle of transformation happening in the client, and see a little into the future regarding what is being born in them, what is maturing, or what is dying. Maybe this is intended to be an aspect of “empathic understanding”: understanding not only who the client is, but also who they are becoming.

This kind of deeper understanding seems indispensable for any serious helper to have.